Waxell is the governance and observability layer for AI agents — whether you built them yourself or your team just started using Claude Code last Tuesday.

I build agents

I manage teams that use agents

Free to start. 2-line setup.

SOC 2 Ready

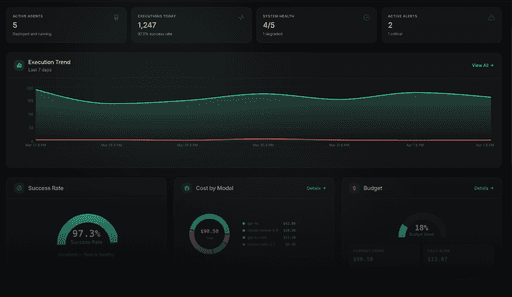

"What are our agents doing?"

No visibility into tool calls, costs, decisions, or failures. When something breaks, you find out afterward, if at all.

"How do we control them?"

No policy enforcement. No approval workflows. No audit trail. The agent did what the agent wanted to do.

"How do we run them safely at scale?"

No governed execution environment for high-stakes automation. Hope is not an operations strategy.

Waxell answers all three — across agents you didn't build and agents you're running in production.

If you write agent code, Waxell Observe gives you production-grade observability and governance from the first run. If your team runs agents you didn't build — Claude Code, Cursor, custom GPT workflows — Waxell Connect brings them into a governed workspace with zero code changes.

Companies aren't debating whether to deploy agents. They're already deployed. The governance conversation starts 6–12 months later. Waxell needs to be in the building before that conversation begins.

For a full feature-by-feature breakdown against LangSmith, Datadog LLM Obs, Arize, and MintMCP, see the Waxell comparison page.

Shadow AI is the new shadow IT.

Developers are running Claude Code, Cursor, and custom agents with no organizational oversight.

Regulation is arriving.

EU AI Act enforcement is underway. US executive orders on AI safety are driving enterprise compliance requirements.

The cost surprises are real.

Companies are finding $50K/month LLM bills with no attribution.

MCP adoption is accelerating.

Anthropic, OpenAI, and Google have all adopted MCP.

Waxell is built to grow with your deployment. Each product delivers value on its own — and the path to the next one is direct.

vs. Datadog / New Relic

vs. LangSmith

LangChain-only. If your stack goes beyond it — and most do — LangSmith goes dark. Waxell sees everything.

vs. Building it yourself

Logging is table stakes. Governance isn't. A 25+ category policy engine with compliance profiles, human-in-the-loop approvals, PII scanning, and full cost attribution across a patent-pending runtime platform is months of engineering — before the UI, the audit trail, or multi-tenant isolation.

vs. "We'll add governance later"

Companies that skip governance during initial deployment spend significantly more fixing it later. Connect makes "early" mean "today, with no code changes."